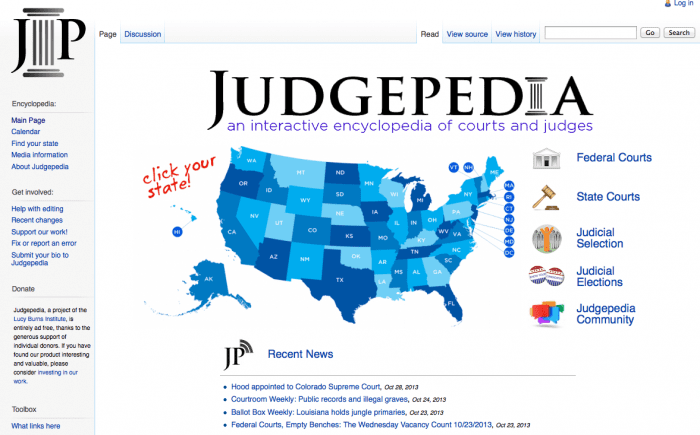

I just found out about Judgepedia, a site that collects information about courts and judges in a shared wiki. Its primary user seems to be someone interested in how the judicial system in the US works, and how individual jurisdictions have set up their judicial systems. It’s a project out of the Lucy Burns Institute.

Judgepedia aims to provide greater clarity to citizens about who runs the courts, how much money they spend, how elections are run, and how the system operates.

It lets users go straight to their local court system & find out how it is run & who the relevant judges are.

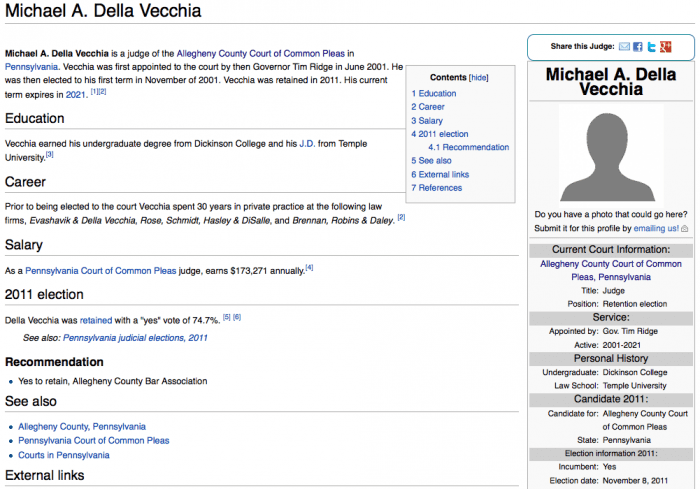

You can look up a brief bio & information on a particular judge.

This site is great in that it compiles information scattered across lots of sites around the web into a single standardized & searchable experience that a user can navigate more easily. The standardization is a great benefit.

Still, there is lots of potential to expand from this basic educational information & get to a new service for consumers of court services. When I first saw the title Judgepedia, I was expecting more info for the legal user: someone who will be encountering the judicial system and wants to know how to deal with a certain judge.

That kind of product would provide stats & metrics about lawyers and judges, which seems like forbidden territory in the legal domain. Even if such an evaluative product could be developed, it seems there would be serious vested interests that would block its implementation.

But still, that seems to be where the real need is.

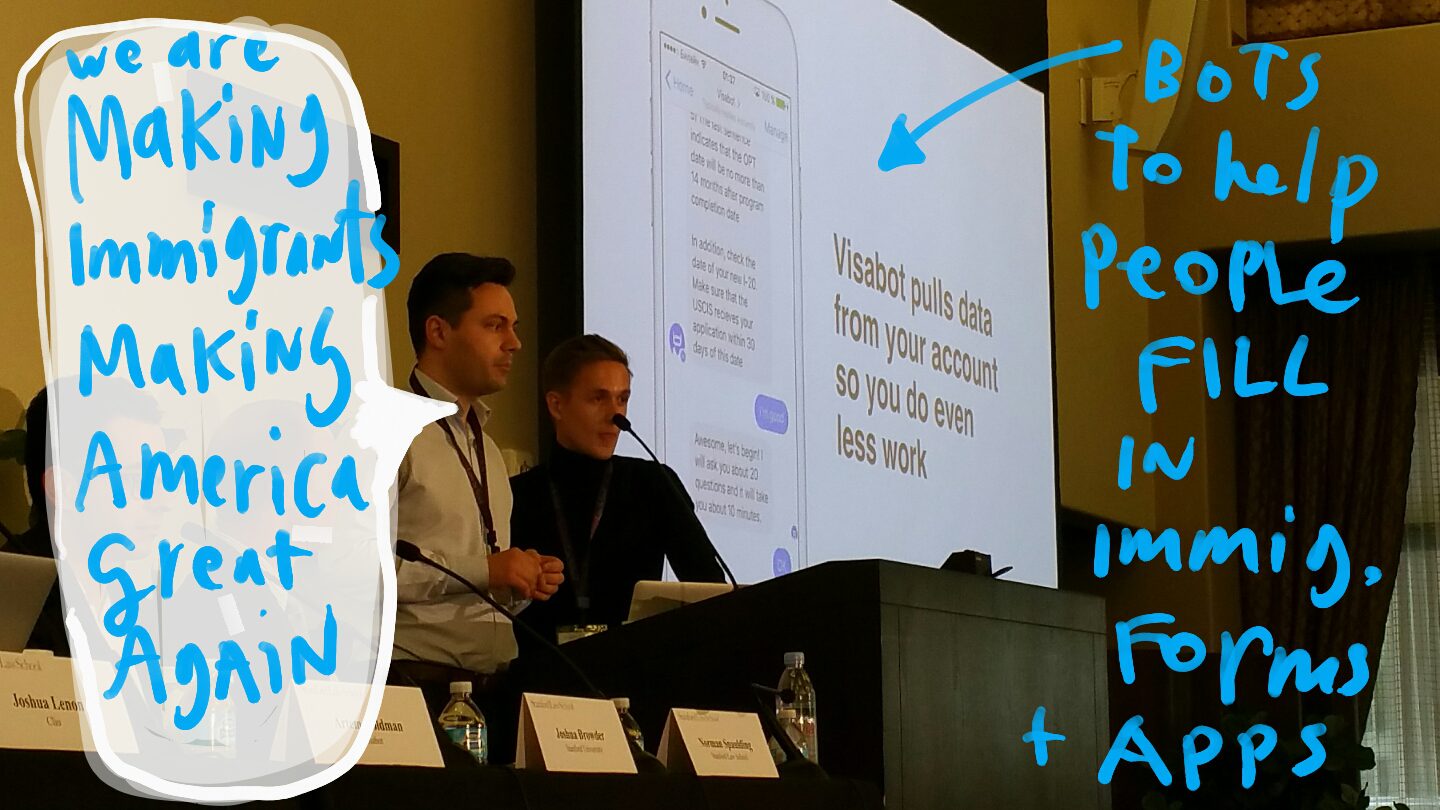

The more I study legal consumers, the more it becomes clear that a main unmet need of lawyers & clients is to build better strategies to get legal tasks done. This is where there is huge promise in crowdsourced information. If we can share information on what strategies work best within specific parts of the legal system & with certain judges — and which ones fail — this would be a huge benefit to litigants. Or if we could build a stats system that tracks judges’ behavior & preferences, this would similarly equip legal users with ways to better prepare their strategies.

The outstanding challenge, then, is how to gather, check, & share this crowdsourced information (which would be a huge undertaking in itself) — and to do it in a way that would survive challenges by lawyers, judges, and others who have a vested interest in resisting evaluation.

2 Comments

The Institute of Design at IIT worked on a project studying self-represented litigants and the court systems, and came up with something similar (the idea, not any actual implementation) called “Pursuit Evaluator”. Come to think of it, you might find the doc from that project very interesting …

http://www.kentlaw.iit.edu/Documents/Institutes%20and%20Centers/CAJT/access-to-justice-meeting-the-needs.pdf

It’s a little dense and written in design-speak, but it was the progenitor of A2J Author.

[…] Margaret Hagan of Stanford University has posted Judgepedia & crowdsourcing court-user info, at Open Law […]