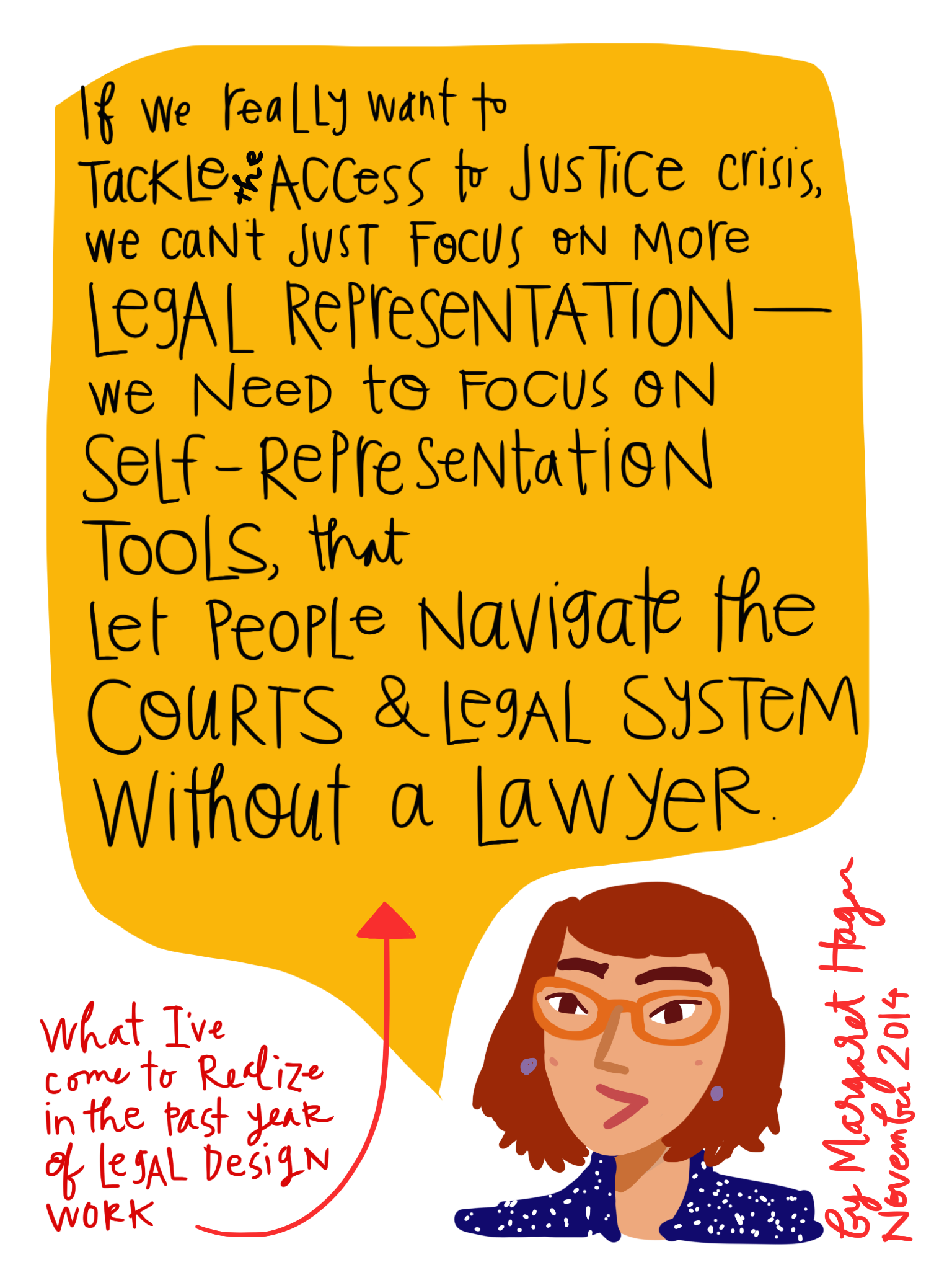

This year, my work at the Legal Design Lab is shifting from the strictly generative and experimental toward evaluation and development. That means we’re working less on open design sprints to spot promising new ideas, and more on testing and refinement, to figure out which types of interventions are most worthwhile to build out and scale.

We began one of these user-led evaluation projects this past November, in a d.school class on Design for Justice: traffic courts. We took the ideas generated in last spring’s version of that class and used the November class to evaluate the different models: which ones most attracted users, and which ones most improved users’ ability to understand their options for dealing with their ticket and to get ‘ability to pay’ relief.

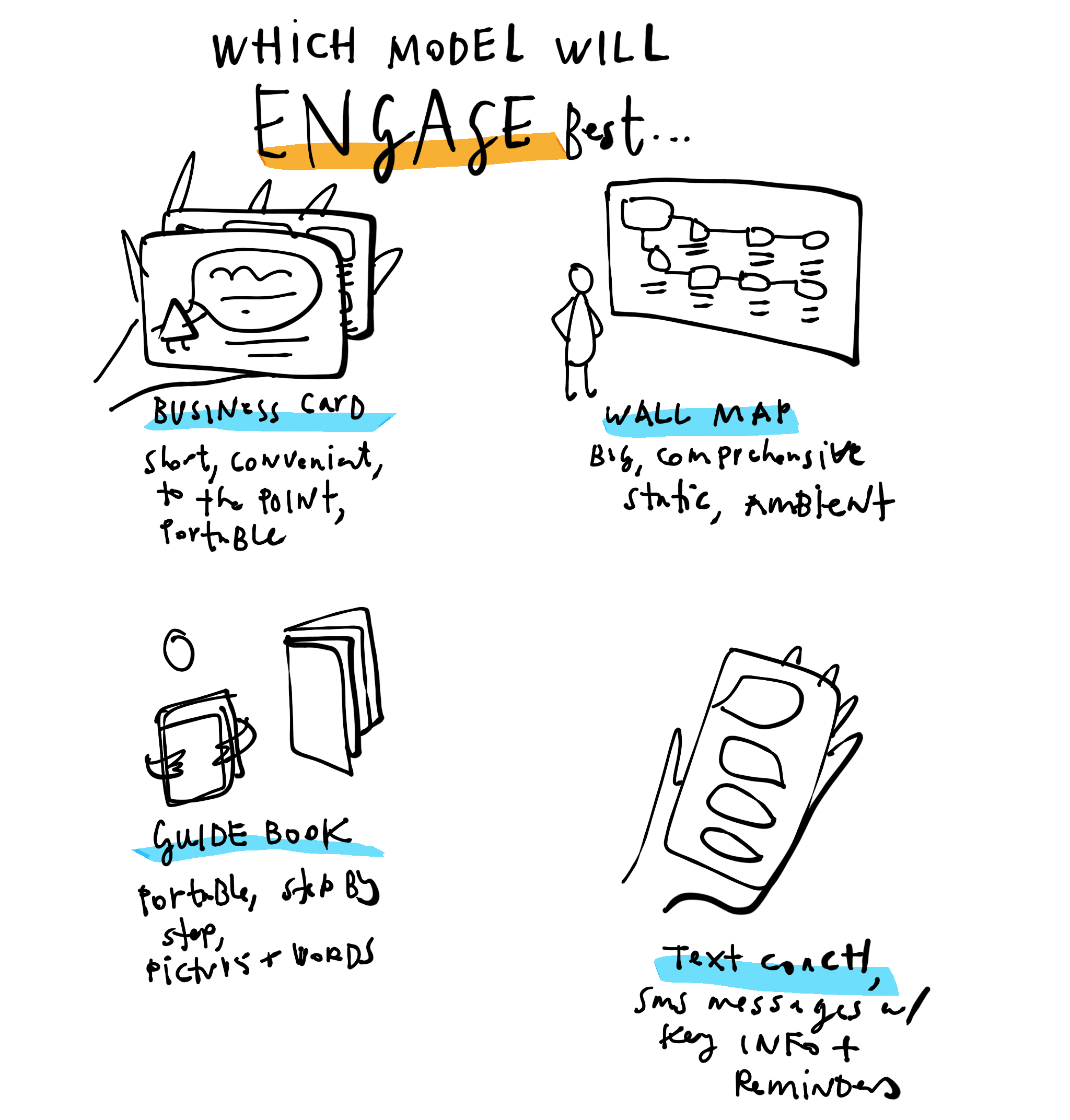

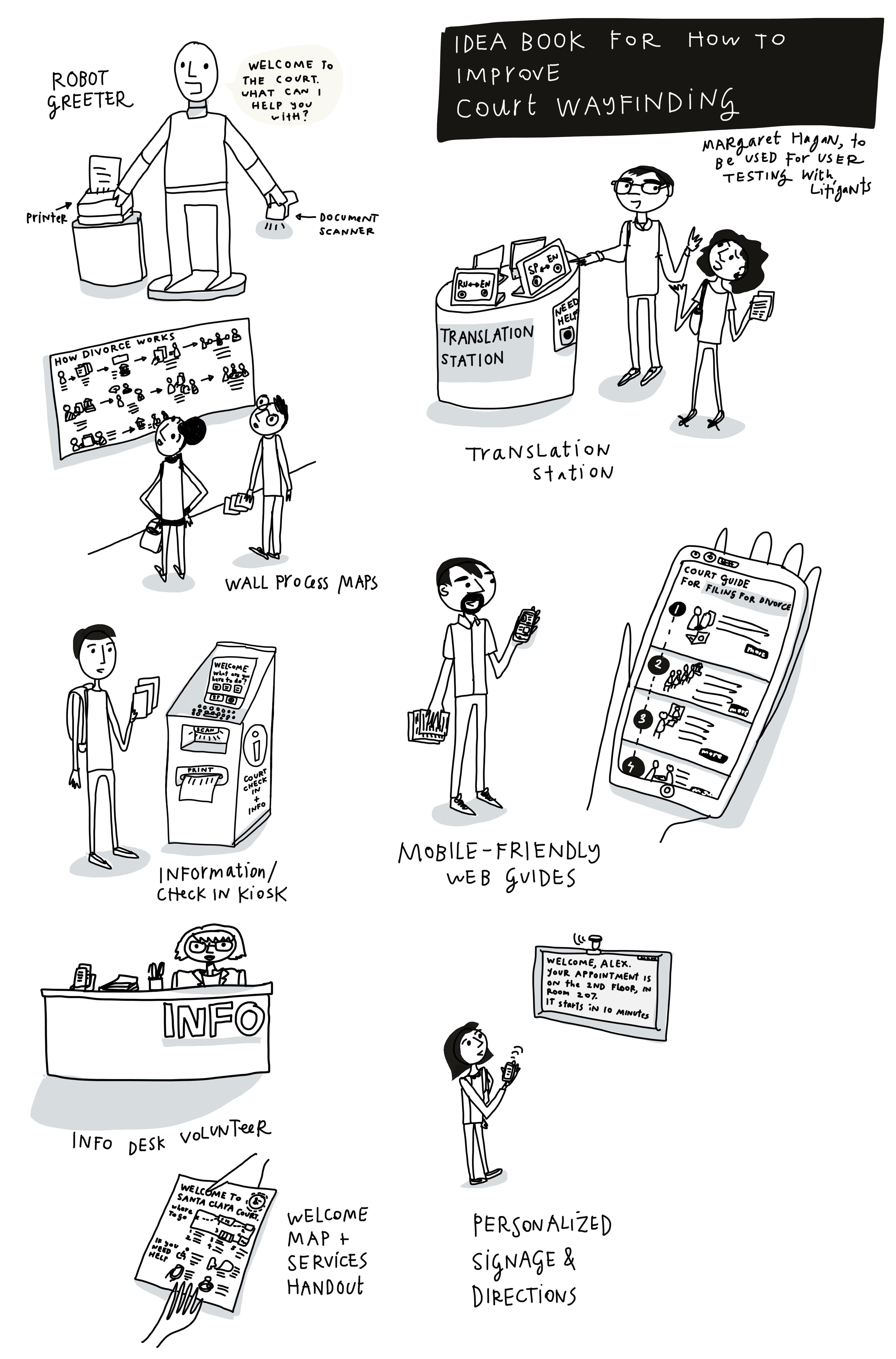

You can read more about our testing sessions and learnings at the class write-up here. Below are some of my planning notes, in which I started thinking through how we might evaluate the user engagement of different access-to-justice interventions.