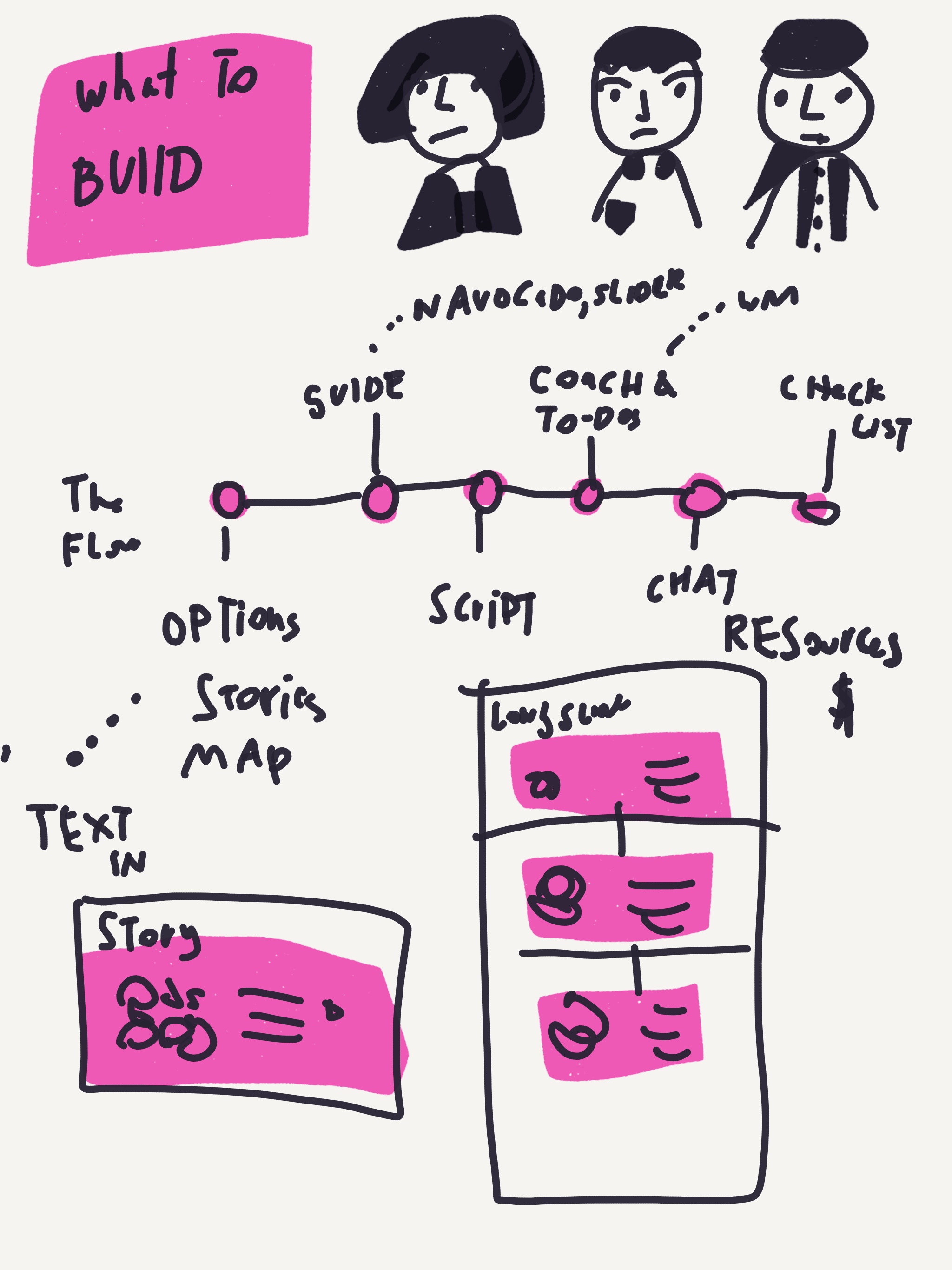

After a few months of user testing in courts, trying out different ideas for innovation, I’m more intent on chat-based dialogue tools as a promising way to increase access to justice. This means we need to focus more resources on coming up with:

- hypotheses about what types of conversation and presentation work best for certain user personas and demographics

  - does this change based on language, culture, age, etc.?

  - does this change by problem area and level of stress or deadline?

- hypotheses about what content can be conveyed through a bot or a chat, versus what cannot

  - initial triage of the person’s problem

  - welcoming, orienting, calming the person

  - detailed triage of exactly what issues and scenario are occurring

  - procedural steps to take

  - checklists and summaries of what the process will be

  - example stories, peer encouragement

  - live chatting and coaching during a hearing or conversation

  - other?

Once we draft these hypotheses, we need to test different versions of bots and other conversational tools in the lab and the field. There are many variables to test: the type of script, the character and tone, the setting, and the user type. The good thing is that it will be relatively inexpensive to create these agents and refine them based on user behavior.
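One way to keep track of these variables is to list every combination as a separate test condition. Here is a minimal sketch in Python. The four variable names come from the paragraph above, but the example values for each are hypothetical placeholders, not options we have settled on.

```python
from itertools import product

# Hypothetical example values for each variable; replace with real options.
variables = {
    "script": ["triage", "procedural_steps"],
    "tone": ["formal", "friendly"],
    "setting": ["lab", "courthouse"],
    "user_type": ["first_time_litigant", "repeat_litigant"],
}


def build_conditions(variables):
    """Return one dict per combination of variable values."""
    names = list(variables)
    return [dict(zip(names, combo)) for combo in product(*variables.values())]


conditions = build_conditions(variables)
print(len(conditions))  # 2 * 2 * 2 * 2 = 16 combinations
```

Even with two options per variable, the grid grows quickly, so in practice it may make sense to test only a subset of conditions at a time.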

I am looking to define different evaluation tools right now, with which we can measure the value of these new bots and chat tools. Some I am looking at are:

- Level of engagement: do people continue to use them? do they return to them? do they take follow-up steps to continue on with them?

- Efficacy in legal process: do people who use them continue on the legal process correctly? do they take the next step? do they get the next step right?

- Efficacy in legal knowledge: do people who use them actually understand the law better, and become better able to navigate the process strategically? do they make wiser decisions?

- Quality of experience: do people who use them have a reduction in stress? Are they
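The first of these measures, level of engagement, could be computed from simple session logs. The sketch below is only an illustration under stated assumptions: the log format, the field names `user_id` and `took_follow_up`, and the sample records are all hypothetical, and a real bot platform would likely export something different.

```python
from collections import defaultdict


def engagement_summary(sessions):
    """Summarize engagement from a list of session records.

    Each record is assumed (hypothetically) to be a dict with a 'user_id'
    and a boolean 'took_follow_up' flag.
    """
    per_user = defaultdict(list)
    for session in sessions:
        per_user[session["user_id"]].append(session)

    total = len(per_user)
    if total == 0:
        return {"users": 0, "return_rate": 0.0, "follow_up_rate": 0.0}

    # Users who came back for more than one session.
    returning = sum(1 for logs in per_user.values() if len(logs) > 1)
    # Users who took a follow-up step in at least one session.
    followed = sum(
        1 for logs in per_user.values() if any(s["took_follow_up"] for s in logs)
    )
    return {
        "users": total,
        "return_rate": returning / total,
        "follow_up_rate": followed / total,
    }


# Made-up sample data, for illustration only.
sample = [
    {"user_id": "a", "took_follow_up": False},
    {"user_id": "a", "took_follow_up": True},
    {"user_id": "b", "took_follow_up": False},
    {"user_id": "c", "took_follow_up": True},
    {"user_id": "d", "took_follow_up": False},
]
summary = engagement_summary(sample)
print(summary)
```

The other measures, such as legal knowledge and stress, would need surveys or follow-up interviews rather than logs alone.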

If you are building bots or thinking about how best to evaluate them, please be in touch! I’d like to work with people who are open to experimenting with different strategies, and who are willing to share data about performance, engagement, etc.

1 Comment

Hi Margaret,

Really enjoy reading through your posts. On your question of what type of content can be provided via chatbots to improve access to justice, I would imagine that providing users with direct links to documents, how-to guides, or helpful sites would lead to more engagement.

Please feel free to reach out at the email provided. I’d be more than glad to help flesh out this idea.